Don't Start With AI. Start With A Problem.

Most AI pilots fail not because technology underdelivers, but because the wrong use case was chosen before the technology ever had a chance. Here's the framework for getting it right.

The Sapien Team

Sapien

Finance and operations teams often enter AI implementations with a mandate before they have a problem. They're reacting to a board pushing for an immediate AI strategy or a competitor announcing a deployment. So they adopt a selection process that starts with the solution and works backwards. The difference between implementations that drive measurable business impact and ones that get abandoned usually comes down to one decision made before any contract is signed: what problem are you actually trying to solve?

There's an important difference between looking for problems to solve with AI and looking for AI to solve your problems. The first approach starts with a technology and hunts for places to apply it. The second starts with your business and asks where visibility is missing, where decisions are being made too slowly, or where an entire category of analysis has never been possible because the effort required made it impractical.

Companies operating under board mandates tend to fall into the first camp. The pressure to demonstrate adoption leads teams toward whatever use case has the most immediate, demonstrable ROI: a contract analysis tool, an automated variance report, a dashboard that replaces a manual spreadsheet. These are important use cases where teams can find value. But they don't build towards a complete AI strategy.

The companies that get the most out of AI start differently. They come in with a short list: three to five areas where they know they lack visibility, where if they had better information, they would run the business differently. That clarity changes what they ask for, what they evaluate, and what they end up building.

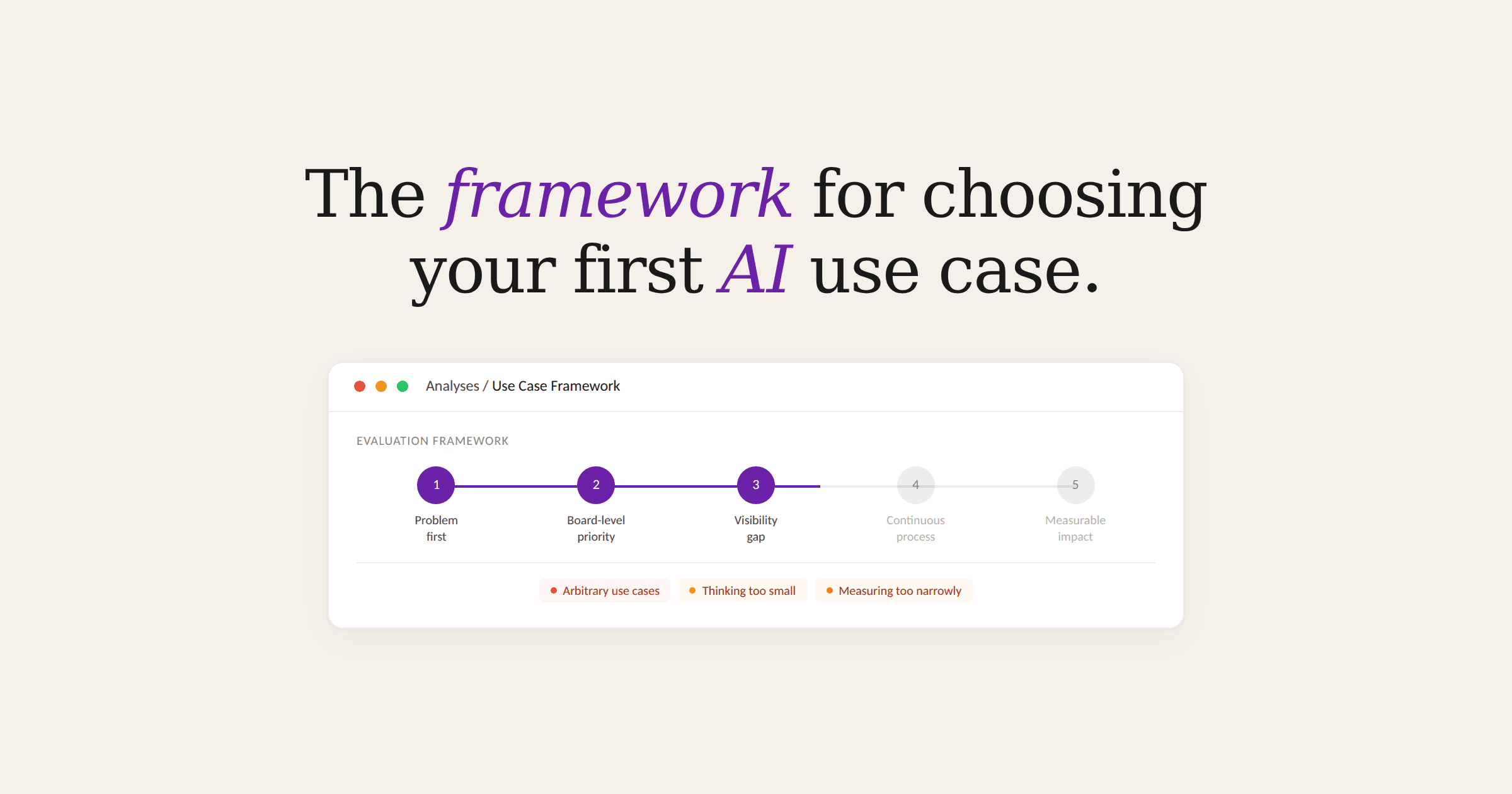

The Three Mistakes That Follow

When teams don't start with that short list, three patterns tend to emerge.

Grasping at arbitrary use cases. Without a clear view of where the business actually has gaps, teams default to whatever is most visible or easiest to pitch internally. The use case gets chosen because it was in front of someone, not because it addresses a meaningful problem. These implementations tend to produce marginal efficiency gains that are hard to defend at the next budget review.

Thinking too small. Teams identify a real problem but scope the solution conservatively based on what they think is technically possible. They send summarized data instead of raw transaction records. They ask AI to replicate an existing report faster instead of asking what the ideal workflow would look like if data were never the constraint. One manufacturer working through a tariff-driven margin problem initially proposed a reporting solution that addressed the symptom. The real opportunity was a proactive pricing workflow that would have changed how they made decisions entirely. They almost missed it because they scoped to what felt achievable rather than what was actually needed.

Measuring too narrowly. Teams measure success purely in hours saved. Time savings are real and worth capturing, but they are the floor of what AI can deliver, not the ceiling. The more significant value comes from analyses that were never previously considered feasible: visibility that doesn't exist today, questions that can't currently be asked, decisions that are made on instinct because assembling the underlying data would take weeks. When a process goes from running monthly to running daily, the value is not only the time saved but that teams can make significantly better-informed decisions than before.

The Framework

Once you have your short list, four questions help determine whether a specific use case is worth pursuing.

Does it tie to a board-level priority? Not just something the finance team cares about, but something that connects to how the business is actually being evaluated. If it doesn't show up in your board materials or your operating targets, it will be difficult to justify and harder to expand.

Do you currently lack visibility into this area, or does getting that visibility require significant manual effort? The key is that AI is augmenting your team's ability to see critical information they either can't see at all or can only see after days of work.

Is the use case continuous? One-off analyses have value, but the compounding returns come from processes that run repeatedly. Something your team does monthly becomes something the business can act on daily. That compression changes the quality of the decisions being made, not just the speed.

Is the impact measurable? You need to be able to say what this use case added to the bottom line. If the use case is so diffuse that you can't point to a specific business outcome, it will be difficult to build on, regardless of how useful it feels in practice.
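For teams that want to run their short list through these four questions in a consistent way, the checklist can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the `UseCase` class, its field names, and the two example entries are hypothetical, and treating all four questions as required is an assumption made here for simplicity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """A candidate use case answered against the four screening questions."""
    name: str
    ties_to_board_priority: bool  # appears in board materials or operating targets
    lacks_visibility: bool        # no visibility today, or only after heavy manual effort
    is_continuous: bool           # runs repeatedly rather than as a one-off analysis
    impact_is_measurable: bool    # you can point to a specific business outcome


# Hypothetical field names, listed in the order the questions appear above.
CRITERIA = (
    "ties_to_board_priority",
    "lacks_visibility",
    "is_continuous",
    "impact_is_measurable",
)


def unmet_criteria(use_case: UseCase) -> list[str]:
    """Return the questions this use case answers 'no' to."""
    return [c for c in CRITERIA if not getattr(use_case, c)]


def worth_pursuing(use_case: UseCase) -> bool:
    """Assumption: a use case qualifies only if all four answers are 'yes'."""
    return not unmet_criteria(use_case)


# Illustrative entries only; the answers are made up for the example.
sku_margin = UseCase("SKU-level margin visibility", True, True, True, True)
contract_review = UseCase("One-off contract analysis", False, True, False, True)

for candidate in (sku_margin, contract_review):
    print(candidate.name, worth_pursuing(candidate), unmet_criteria(candidate))
```

Listing the unmet questions, rather than returning only a yes or no, keeps the conversation on why a use case falls short, which is usually more useful than the verdict itself.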

Who This Looks Different For

Industry context shapes which use cases tend to score well on this framework. A mid-market manufacturer optimizing for EBITDA will find the most leverage in use cases tied to product profitability, cost drivers, and margin at the SKU level. A scaling software business focused on a revenue multiple will prioritize visibility into unit economics or retention patterns. The framework is the same, but the answers look different.

How to Build Your Short List

One question tends to unlock it:

If you could have proactive visibility into any part of your business, what would it be, and what would it change?

This framing pulls people out of the constraints they've internalized from years of working with rigid systems and asks them to think about what they would actually want, not what they've learned to settle for. The answers are usually immediate, specific, and pointed directly at the problems that carry the most business weight.

Where are you making decisions today without the information you'd want? What analyses does your team consistently deprioritize because the effort required isn't worth it for a monthly cadence, but would be valuable if they could be done continuously? Where does your business have a lever it can't currently pull because the underlying visibility doesn't exist?

The Foundation Matters

Your first use case needs to deliver ROI, but it also needs to be worth building on. The companies that get the most out of AI over time are the ones that picked a starting point that built real understanding of their data, their definitions, and their priorities, and then used that foundation to expand. The first use case is a proof of concept, but it is also the base for everything that follows.

Pick a use case based on your most pressing business problems, not on preconceptions of AI's limits. The technology will meet you there.

— The Sapien Team